Considerations in Particle Sizing – Part 2: Specifying a Particle Size Analyzer

In Part 1 we stated that the aim is to provide a pathway through the decision-making process of choosing a particle sizing analyzer by means of asking and answering three general questions:

- How do I classify the various techniques?

- How do I set specifications (quantitative or qualitative)?

- Which technique(s) have the best chance of solving my problems?

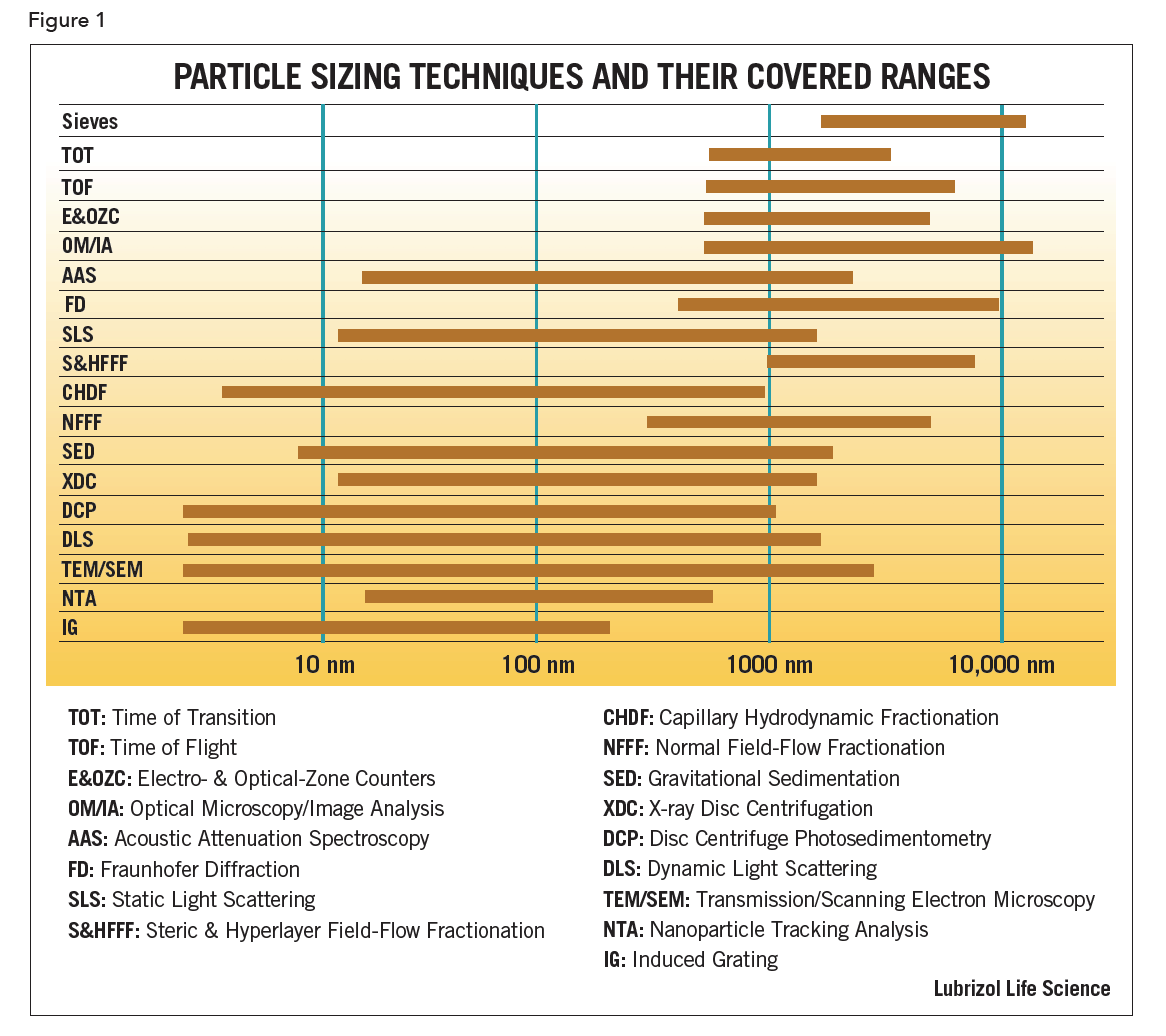

We started by classifying the different particle sizing techniques in four ways: (i) size range, (ii) degree of separation (i.e., fractionation), (iii) imaging vs. non-imaging methods and (iv) weighting: intensity, volume, surface and number.

Information Content

A fifth way to classify a particle sizer is by information content. This final major classification revolves around the amount of information required to solve a problem. There are two key questions to ask that determine which techniques are useful.

- What do you want?

Averages, widths, tables & graphs, etc.

- How will you use it?

Process control, QC or R&D applications

If all that is needed is an average particle size, then a single-moment instrument is sufficient. For an average and a distribution width, an ensemble averaging instrument will suffice. However, the more information needed, the higher the resolution required. But caveat emptor: beware the “zero-to-infinity” trap set by over-hyped marketing claims made for many instruments.

Answering the first question, “What do you want?”, may not be easy, but the answer often follows from the answer to the second question. For example, in most process control environments, varying a single parameter is reasonable, but varying multiple parameters is difficult. In this case, opt for one piece of information, which might be the size below which 90% of the particles fall. For QC, an average and a measure of distribution width are often sufficient, though sometimes the second piece of information is nothing more than a spec such as d90 < 2 µm. Generally, only in an R&D environment is it useful to consider asking for more information. Additional size distribution information, often hard to come by reliably, might be the skewness of a single, broad distribution. It could also be the size and relative amounts of several peaks in a multi-modal distribution, or the existence of a few particles at one extreme of a distribution. Where the distribution has several closely spaced features, a true high-resolution technique is imperative.
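A spec such as d90 < 2 µm can be checked directly from a cumulative size distribution. The following is a minimal sketch, not a description of any instrument's software. The histogram values are hypothetical, the function name `percentile_size` is invented for illustration, and the code assumes a volume-weighted histogram given by upper bin edges, a first bin starting at zero, and linear interpolation within each bin.

```python
from itertools import accumulate

def percentile_size(upper_edges_um, fractions, p):
    """Size below which a fraction p (0 < p <= 1) of the distribution lies.

    Assumes the first bin starts at 0 µm and interpolates linearly inside a bin.
    """
    if not 0 < p <= 1:
        raise ValueError("p must satisfy 0 < p <= 1")
    total = sum(fractions)
    cumulative = [c / total for c in accumulate(fractions)]
    prev_size, prev_cum = 0.0, 0.0
    for size, cum in zip(upper_edges_um, cumulative):
        if cum >= p:
            # Linear interpolation between the lower and upper edge of this bin
            return prev_size + (p - prev_cum) / (cum - prev_cum) * (size - prev_size)
        prev_size, prev_cum = size, cum
    return float(upper_edges_um[-1])

# Hypothetical volume-weighted histogram (illustrative numbers only)
upper_edges_um = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
volume_fraction = [0.05, 0.20, 0.35, 0.25, 0.10, 0.05]

d50 = percentile_size(upper_edges_um, volume_fraction, 0.50)
d90 = percentile_size(upper_edges_um, volume_fraction, 0.90)
meets_spec = d90 < 2.0  # the example spec from the text: d90 < 2 µm

print(f"d50 = {d50:.2f} µm, d90 = {d90:.2f} µm, meets d90 < 2 µm: {meets_spec}")
```

With these made-up numbers d90 comes out at about 2.25 µm, so the sample would fail the spec even though its median is well below 2 µm.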

Specifications

Specifications are of two types: quantitative and qualitative.

Quantitative

Specifications of this type comprise size range, throughput and definitions: accuracy, precision, reproducibility and resolution.

Size Range

This was discussed in Part 1 in the section on Classification of Techniques (Part 1 – Figure 1).

Throughput

The novice often mistakenly assumes that the measurement duration is sufficient to characterize the typical time per sample. Sometimes the measurement duration is only a fraction of the actual time per cycle. Throughput depends on the total time per sample, which is the sum of all the following: sample preparation, analysis, data reduction/printout/interpretation and cleanup.

Throughput is probably most important to a QC laboratory where, often, large numbers of samples must be run in one day. In process control applications, speed of analysis is sometimes a major consideration even for a single measurement. Sample preparation may take as little as a few minutes or may need to run overnight. Warm-up, calibration and instrument adjustment all add to the overall time. With most modern instruments, the actual measurement or analysis time can be short. Yet, for broad distributions, sieving and sedimentation techniques (including field-flow fractionation) are relatively slow compared to most forms of light scattering. Single particle counting (SPC) is fast for narrow distributions but can be slow for broad distributions. Data reduction and printout are fast given modern computers. The time to interpret the data depends on the analyst and on what criteria have been set. Cleanup time is often seriously underestimated.

Finally, it is wise to consider whether a fast measurement or analysis time is worth it if the sum of all the other times is considerable. If the total time per sample is not much different, a higher resolution but slower technique may be the better choice.
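The point about total cycle time can be made concrete with simple arithmetic. The sketch below compares two hypothetical instruments. All times are invented for illustration, and the class name `CycleTime` and its fields are assumptions rather than figures for any real technique.

```python
from dataclasses import dataclass

@dataclass
class CycleTime:
    """Minutes spent on each stage of one sample cycle (all values hypothetical)."""
    sample_prep: float
    measurement: float
    data_handling: float  # data reduction, printout, interpretation
    cleanup: float

    def total(self) -> float:
        return self.sample_prep + self.measurement + self.data_handling + self.cleanup

# Same preparation and cleanup; only the measurement time differs
fast_low_res = CycleTime(sample_prep=20, measurement=2, data_handling=3, cleanup=10)
slow_high_res = CycleTime(sample_prep=20, measurement=12, data_handling=3, cleanup=10)

measurement_ratio = slow_high_res.measurement / fast_low_res.measurement
cycle_ratio = slow_high_res.total() / fast_low_res.total()

shift_minutes = 8 * 60
samples_fast = shift_minutes // fast_low_res.total()
samples_slow = shift_minutes // slow_high_res.total()

print(f"measurement is {measurement_ratio:.1f}x slower, full cycle only {cycle_ratio:.2f}x slower")
print(f"samples per 8 h shift: {samples_fast} vs {samples_slow}")
```

In this made-up case a measurement that is six times slower lengthens the full cycle by less than a third, which is the situation in which the slower, higher resolution technique deserves a second look.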

Definitions

Accuracy is a measure of how close an experimental result is to the “true” value. For irregularly shaped particles, techniques that cannot be calibrated, or any other set of conditions where a “true” value is unknown or not well defined, accuracy has no meaning. For spheres and other simple shapes, accuracy can be established by comparison between several techniques. Surprisingly, below one µm, absolute accuracy is typically no better than 3%.

Precision is a measure of the variation in repeated measurements under the same conditions (instrument, sample, and operator). Accuracy (associated with systematic error) and precision (associated with random error) are related: the results of many measurements may group tightly together (high precision, low random error) but the mean of the group may be far from the true value (low accuracy, high systematic error). However, if a measurement is highly accurate, then repeated measurements must be grouped around the true value. Still, an accurate mean value may come from measurements of either high or low precision. When the precision is low, it limits the accuracy of any single measurement. Precision also limits resolution and reproducibility, and it is a useful criterion by which to assess instruments even when accuracy cannot be determined.
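The distinction between accuracy and precision can be illustrated with repeated measurements of a reference material. This is a minimal sketch using made-up numbers: the certified value and the five repeat readings are hypothetical, and expressing precision as a coefficient of variation is one common convention, assumed here.

```python
import statistics

# Hypothetical reference sphere and repeat readings (illustrative values only)
certified_nm = 100.0
repeats_nm = [103.1, 102.8, 103.3, 102.9, 103.4]

mean_nm = statistics.mean(repeats_nm)
sd_nm = statistics.stdev(repeats_nm)  # sample standard deviation (n - 1)

# Systematic error: how far the mean sits from the certified value
bias_pct = 100.0 * (mean_nm - certified_nm) / certified_nm
# Random error: scatter of the repeats relative to their own mean
cv_pct = 100.0 * sd_nm / mean_nm

print(f"mean = {mean_nm:.1f} nm, bias = {bias_pct:+.1f}%, CV = {cv_pct:.2f}%")
```

Here the repeats agree with each other to about a quarter of a percent yet sit roughly 3% above the certified value: high precision, lower accuracy.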

Resolution is a measure of the minimum detectable difference between distinct features in a size distribution. It remains an important concept even for broad, unimodal distributions. If the measured breadth of a distribution is meaningful, then the instrument that produces it should be able to separate narrow size peaks spaced closer than or equal to that breadth. Otherwise the measured breadth is really an instrumental broadening effect. Generally, SPC and fractionators produce high resolution size distributions, whereas ensemble averaging devices (light scattering and diffraction instruments) produce medium to low resolution size distributions. Resolution is a function of the signal-to-noise ratio of the instrument. Reporting more detail than the signal-to-noise ratio supports is like magnifying the noise; more numbers are obtained, but they are meaningless. The particle size of many APIs is typically above one µm and the size distribution is very broad, so a common assertion is that resolution is unimportant. However, if the fundamental resolution of an instrument is undetermined, then one cannot really know whether the broad distribution is hiding practical and possibly significant information.

Reproducibility is a measure of the variation between different machines, operators, sample preparations, etc. It becomes most important when comparing the results produced on two different machines of the same type. Such a situation is quite common where multiple particle sizers of one make and model have been purchased for use in different laboratories and/or locations. It is surprising how often the reproducibility (expressed as a range of values) exceeds the basic precision of any one of the machines. In such cases, it is useful to conduct round-robin tests on the same sample, under the same set of prescribed conditions, to isolate any machine-to-machine variations. A classic example is the large differences obtained on FD instruments with high angle light scattering detectors from the same manufacturer, caused by evolving software variations on how best to handle the necessary light scattering (Mie) corrections.
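A round-robin test of this kind reduces to comparing the scatter within each machine against the spread between machines. The sketch below uses invented readings for three hypothetical instruments of the same model. The instrument labels, the values, and the use of a pooled standard deviation (valid here because each machine has the same number of repeats) are all assumptions made for illustration.

```python
import math
import statistics

# Hypothetical round-robin: same sample, same prescribed conditions, mean size in µm
round_robin_um = {
    "instrument_A": [1.52, 1.50, 1.51, 1.53, 1.49],
    "instrument_B": [1.61, 1.60, 1.62, 1.59, 1.63],
    "instrument_C": [1.45, 1.46, 1.44, 1.47, 1.43],
}

means = {name: statistics.mean(vals) for name, vals in round_robin_um.items()}
variances = {name: statistics.variance(vals) for name, vals in round_robin_um.items()}

# Precision of a single machine: pooled within-instrument standard deviation
pooled_within_sd = math.sqrt(statistics.mean(variances.values()))

# Machine-to-machine reproducibility, expressed as a range of the instrument means
between_range = max(means.values()) - min(means.values())

print(f"pooled within-instrument SD: {pooled_within_sd:.3f} µm")
print(f"range of instrument means:   {between_range:.3f} µm")
```

In this made-up data set each machine repeats to within about 0.016 µm, while the machines disagree with one another by 0.16 µm, so the between-machine variation dominates.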

Qualitative

In addition to quantitative specifications, there are qualitative ones that are important considerations for the purchase of any analytical instruments. These include the following:

Support: Are training, service, and applications assistance available during installation, throughout the warranty period, and for as long as the instrument is still serviceable? An instrument might be available at a lower price from a supplier in another country, but check that it comes with the expected type and level of support. Ask for references to verify any claims that are made. Ask also about any continuing program of development to guard against obsolescence.

Ease-of-Use: This is a very subjective concept. Will the instrument be used by experts or by inexperienced users? Although the goal of a “one button” device is admirable, it is rarely achieved, if for no other reason than that sampling and sample preparation are not amenable to a single button. If ease of use is important, be sure to watch measurements being made before committing, and watch the entire process from sample preparation to clean-up.

Versatility: This is defined as the ability to measure a wide variety of samples under a wide variety of conditions. Does the instrument handle samples in air, liquids, or both? Does the instrument work with polar as well as nonpolar liquids? Does the instrument work with dilute samples or concentrates or both? Try to estimate a realistic range of sample types and the corresponding size ranges intended to be measured. Experience has shown that it is usually better to choose dedicated instruments that do a good job for their intended purpose rather than a poor job on a wide variety of samples.

Life-Cycle Cost: The basic instrument cost is only one factor to consider. The total price is best judged in terms of the life-cycle cost. This includes purchase price, operating cost, maintenance, and repair costs. Every instrument needs some type of maintenance. It may be as simple as cleaning air filters once a month; it may be as difficult as replacing mechanical parts or aligning an optical system. And every instrument will, sooner or later, require repairs. If operation or maintenance is labor-intensive, the life-cycle cost can be quite high. If special solvents or significant environmental costs are involved, the life-cycle cost may be high enough to justify considering alternative choices.

Of all these qualitative considerations, support is, perhaps, the most important. When choosing between vendors of similar equipment, the one with better support may tip the scale in its favor. Do not assume that the largest vendor, or the one with the fanciest brochure, will provide the best support. Today, many companies use representatives to sell and service instruments. Just as you would choose any professional service, asking for references and getting second opinions should be an integral part of the purchase process.

Conclusion To Parts 1 and 2

Narrow down the possibilities and then make a choice

Start with Figure 1 and find the overlap of your expected size range with the various techniques that purport to measure that range. Identify techniques whose midrange covers your expected size range. Don’t know your size range? Get some preliminary measurements made but pay attention to sampling and sample preparation. The biggest mistake at this point is to choose the apparent zero-to-infinity devices.

Given the list, narrow it further by deciding if you need imaging (irregular particle shapes that correlate with end-product performance) or not, single particle counting (absolute concentration) or not, and what degree of information you require.

Now carefully consider the quantitative and qualitative specifications, giving the most weight to those aspects that pertain to your situation. While automated, high throughput instrumentation is convenient, if it sacrifices the resolution you need to make good decisions, consider carefully.

Accuracy, precision, resolution and reproducibility are functions of the size range. Errors are always greatest at the extremes. A common mistake is to check an instrument in its midrange and then proceed to use it at one or the other of the extremes. Be skeptical of claims if these refer only to the average size. The average of any distribution is least subject to variation. Even instruments with poor resolution and poor instrument-to-instrument reproducibility can yield results with 2% precision in the average. Higher moments, such as the measure of width or the skewness, are much more sensitive to uncertainties, so pay particular attention to the variance in these statistics. If it is not clear from the manufacturer’s literature, then ask for clarification.
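The claim that the average is the least variable statistic can be illustrated with a small simulation. This is only a sketch under stated assumptions: it draws repeated samples from a hypothetical lognormal population and models nothing but finite sampling noise, not the behavior of any real instrument. The population parameters, sample sizes, and helper names are invented for illustration.

```python
import random
import statistics

def sample_skewness(values):
    """Third standardized moment of a sample (population-style estimator)."""
    m = statistics.fmean(values)
    s = statistics.pstdev(values)
    return sum(((v - m) / s) ** 3 for v in values) / len(values)

def relative_spread(estimates):
    """Run-to-run standard deviation of a statistic, relative to its mean value."""
    return statistics.stdev(estimates) / abs(statistics.fmean(estimates))

rng = random.Random(0)  # fixed seed so the sketch is repeatable
means, widths, skews = [], [], []
for _ in range(200):  # 200 simulated repeat "measurements"
    # Each measurement samples 200 particles from a hypothetical lognormal population
    sizes = [rng.lognormvariate(0.0, 0.3) for _ in range(200)]
    means.append(statistics.fmean(sizes))
    widths.append(statistics.stdev(sizes))
    skews.append(sample_skewness(sizes))

spread_mean = relative_spread(means)
spread_width = relative_spread(widths)
spread_skew = relative_spread(skews)

print(f"relative run-to-run spread: mean {spread_mean:.1%}, "
      f"width {spread_width:.1%}, skewness {spread_skew:.1%}")
```

Under these assumptions the run-to-run spread grows from the mean to the width to the skewness, which is why a precision figure quoted only for the average says little about how well an instrument reports the shape of a distribution.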

Finally, before purchasing, ask the vendor for a list of users who have had the instrument for at least one year. Contact them and ask about their experience with maintenance and repairs.